10 CRO Experts Reveal Their Worst A/B Testing Mistakes

You’ve read the blog posts and you’ve heard from the vendors. A/B testing is a lot more difficult than you might imagine, and if you aren’t careful, you can unintentionally wreak havoc on your online business.

Fortunately, you can learn how to avoid these awful A/B testing mistakes from 10 CRO experts. Here’s a quick look at some of their greatest pitfalls:

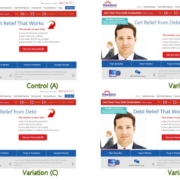

Joel Harvey, Conversion Sciences Worst A/B Testing Mistake

“Because of a QA breakdown, we didn’t notice that the last 4 digits of one of the variation phone numbers displayed to visitors were 3576 when they should have been 3567. In the short time that the offending variation was live, we lost at least 100 phone calls.”

Peep Laja, ConversionXL Worst A/B Testing Mistake

“Ending tests too early is the #1 mistake I see. You can’t ‘spot a trend’, that’s total bullshit.”
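One way to avoid stopping early is to estimate the required sample size before the test starts and commit to it. The sketch below is an illustration, not something from the quoted experts: it uses the standard normal-approximation formula for comparing two conversion rates, and the function name, the 3% baseline, and the 10% relative lift are all assumptions chosen for the example.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variation of a two-sided test.

    baseline_rate: control conversion rate, e.g. 0.03 for 3%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.10
    alpha:         accepted false-positive rate
    power:         chance of detecting the lift if it really exists
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 3% baseline, hoping to detect a 10% relative lift.
needed = visitors_per_variation(0.03, 0.10)
print(f"Visitors needed per variation: {needed}")
```

With these assumed inputs the answer is in the tens of thousands of visitors per variation, which is why a few days of data that "looks like a trend" rarely settles anything.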

Craig Sullivan, Optimise or Die Worst A/B Testing Mistake

“When it comes to split testing, the most dangerous mistakes are the ones you don’t realise you’re making.”

Alhan Keser, Widerfunnel.com Worst A/B Testing Mistakes

“I had been allocated a designer and developer to get the job done, with the expectation of delivering at least a 20% increase in leads. Alas, the test went terribly and I was left with few insights.”

Andre Morys, WebArts.de Worst A/B Testing Mistake

“I recommend everybody to do a cohort analysis after you test things in ecommerce with high contrast – there could be some differences…”

Ton Wesseling, Online Dialogue Worst A/B Testing Mistake

“People tend to say: I’ve tested that idea – and it had no effect. YOU CAN NOT SAY THAT! You can only say – we were not able to tell if the variation was better. BUT in reality it can still be better!”
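A confidence interval makes this distinction concrete. The following is a minimal sketch, assuming a simple normal-approximation (Wald) interval for the difference between two conversion rates; the function name and the visitor and conversion counts are hypothetical and do not come from any test described here.

```python
from math import sqrt
from statistics import NormalDist


def lift_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Interval for (rate of B minus rate of A), in absolute terms."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    std_err = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * std_err, diff + z * std_err


# Hypothetical test: 4,000 visitors per arm, 120 vs. 132 conversions.
low, high = lift_confidence_interval(120, 4000, 132, 4000)
print(f"Difference in conversion rate: {low:+.4f} to {high:+.4f}")

if low < 0 < high:
    print("Inconclusive: the data fit both a loss and a gain.")
```

In this made-up case the interval covers zero, so the test is not significant, but it also covers gains of up to about one percentage point. The data cannot rule out that the variation is better, which is a different statement from "it had no effect."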

John Ekman, Conversionista Worst A/B Testing Mistake

“AB-testing is not a game for nervous business people, (maybe that’s why so few people do it?!). You will come up with bad hypotheses that reduce conversions!! And you will mess up the testing software and tracking.”

Paul Rouke, PRWD Worst A/B Testing Mistake

“One of the biggest lessons I have learnt is making sure we fully engage, and build relationships with the people responsible for the technical delivery of a website, right from the start of any project.”

Matt Gershoff, Conductrics Worst A/B Testing Mistake

“One of the traps of testing is that if you aren’t careful, you can get hung up on just seeing what you DID in the past, but not finding out anything useful about what you can DO in the future.”

Michael Aagaard, ContentVerve.com Worst A/B Testing Mistakes

“After years of trial and error, it finally dawned on me that the most successful tests were the ones based on data, insight and solid hypotheses – not impulse, personal preference or pure guesswork.”

- 10 CRO Experts Reveal Their Worst A/B Testing Mistakes - January 26, 2015
